Key Takeaways:

AI ≠ real penetration testing. Mythos showed strong code analysis and chaining of known techniques, but it operated in a lab with no defenses. That’s closer to automated research than adversary emulation against real-world controls.

Hype is conflating two markets. AI can replace checkbox/compliance-style testing, but not high-end, human-led adversary simulation. The “pentesters are obsolete” narrative ignores that distinction.

Humans still win on adaptability and stealth. Real attackers adjust to defenses, minimize noise, and pursue objectives beyond initial access. AI today is noisy, iterative, and bounded by its scaffolding—it doesn’t think strategically or adapt in-context.

The hardest, most impactful flaws aren’t automatable. Business logic abuse, social engineering, environment-specific misconfigurations, and post-compromise behavior all require human understanding of context, not just code or patterns.

A technical reality check for security decision makers

The announcement of Claude Mythos Preview and AISI’s evaluation triggered some of the most overblown coverage the security industry has seen in years.

Headlines claimed this was the end of penetration testing. Analysts predicted human offensive security teams would become obsolete. Even Forrester argued that finding vulnerabilities is no longer the hard part – suggesting pentest firms will be reduced to reselling AI at a markup.

Jason Schmitt, CEO of Black Duck, told Security Magazine that Mythos “appears to be capable of entirely automating human based pentesting and bug bounty” programs.

This narrative is dangerously misleading. It confuses a benchmark with a battlefield, a lab result with a live fire exercise, a demo with a defense. Act on it and you will learn the difference the hard way.

As cybersecurity experts, we believe honesty and integrity matter more than hype. It is our responsibility to be precise about what Mythos actually demonstrated, what it did not demonstrate, and why the gap between those two things matters more than anything else being written about it right now. The organizations making security decisions based on this coverage risk being misled if we do not deliver accuracy.

This applies to AI generally, not to Mythos alone.

For Context: There Are Two Penetration Testing Industries

When Visa, Mastercard, and three other major card brands introduced PCI DSS in 2004, they failed to define what a real penetration test actually entailed. That gap gave birth to the compliance penetration testing industry: an entire market built around checking boxes rather than testing defenses. Compliance penetration testing and genuine penetration testing are not the same thing. One sells paper seatbelts. The other sells protection that works. AI is a real threat to compliance vendors because checkbox testing is exactly what automation replaces. It is a benefit to genuine vendors because it makes skilled human operators faster and more effective. The industry does not differentiate between the two, but top tier practitioners do.

What Mythos Actually Did

The findings should not be dismissed; they are both real and significant. Among other things, Mythos:

- Found a 27 year old integer overflow vulnerability in OpenBSD’s TCP SACK implementation by running approximately one thousand scaffold attempts at a total cost of around $20,000.

- Found a 16 year old flaw in FFmpeg’s H.264 codec that had survived more than five million automated fuzzing passes.

- Completed AISI’s 32 step corporate network attack simulation called “The Last Ones” end to end in three out of ten attempts, averaging 22 of 32 steps (discrete stages in the attack chain) across all runs. Prior models never averaged more than 16 steps. No model before Mythos had completed the chain at all.

These are genuine technical achievements. The semantic code reasoning required to find the FFmpeg vulnerability is qualitatively different from what fuzzing does. Fuzzers bombard code with random inputs and wait for crashes. Mythos read the code, understood the logic (within the limits of AI), identified a specific mismatch between a 16 bit table and a 32 bit counter, and recognized the conditions under which that mismatch becomes exploitable. That is not brute force. It is a form of reasoning that uses complex prediction to mimic human thought, and it has real implications for vulnerability research.
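To make that class of bug concrete, here is a minimal Python sketch of what a width mismatch between a 16 bit table and a 32 bit counter can look like. It is a simplified illustration only: the names `SENTINEL`, `record_owner`, and `is_unassigned` are invented for this example, and it is not the actual FFmpeg code or the path Mythos identified.

```python
# Hypothetical illustration of a 16-bit/32-bit width mismatch.
# Not FFmpeg code; all names are invented for this sketch.

MASK_16 = 0xFFFF
SENTINEL = 0xFFFF  # value the table uses to mean "slot not assigned"


def record_owner(table, slot, counter):
    """Store a 32-bit counter in a table whose entries hold only 16 bits."""
    table[slot] = counter & MASK_16  # the upper 16 bits are silently dropped


def is_unassigned(table, slot):
    """Later code trusts the sentinel to mean 'nothing was written here'."""
    return table[slot] == SENTINEL


table = [SENTINEL] * 4

record_owner(table, 0, 7)            # small counter: stored faithfully
record_owner(table, 1, 0x0001_0007)  # large counter: truncates to 7
record_owner(table, 2, 0xFFFF)       # counter that equals the sentinel

print(table[0] == table[1])     # two distinct counters now look identical
print(is_unassigned(table, 2))  # an assigned slot now looks empty
```

A fuzzer only stumbles on this if random input happens to drive the counter high enough. Spotting it by reading the code means noticing that the two widths disagree and asking what input makes that matter.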

Mythos represents a meaningful leap in autonomous code analysis, but that’s it. Everything else is where the story falls apart.

The Lab Is Not the Real World

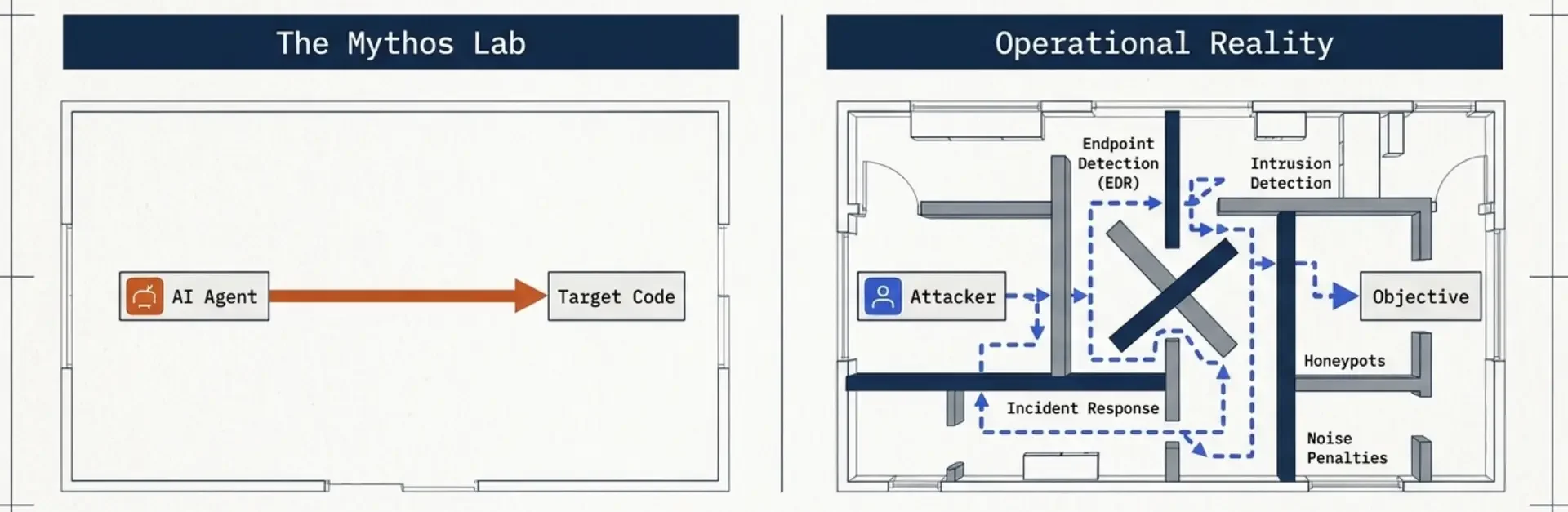

Mythos was tested in an environment with:


- no endpoint detection

- no intrusion detection

- no honeypots

- no incident response

- no consequences for making noise

That is not a measure of offensive capability against a real target. It is a measure of how quickly an AI can run through permutations of a human built scaffold until something works. At a certain point, it starts to look less like intelligence and more like brute force with better tooling.

A scaffold is a human built harness that orchestrates an AI agent against a target. It sets up the environment, invokes the model, captures the output, feeds results back in, and loops. Scaffolds can carry state forward between attempts like prior findings, failed paths, and accumulated context, but they cannot change what the model is. The weights don’t update. Whatever the model couldn’t reason about on attempt one, it can’t reason about on attempt one thousand. Scaffolding gives an agent more tries, not more skill.
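The loop described above can be sketched in a few lines of Python. This is a toy under stated assumptions, not any vendor's harness: `fixed_model` is a stub standing in for a call to a frozen model, and `ScaffoldState`, `run_scaffold`, and the path names are hypothetical.

```python
# Hypothetical scaffold loop. `fixed_model` stands in for a call to a
# frozen model; it is a stub, not any vendor's API.
from dataclasses import dataclass, field


@dataclass
class ScaffoldState:
    """State the harness carries forward between attempts."""
    failed_paths: list = field(default_factory=list)
    findings: list = field(default_factory=list)


def fixed_model(target, state):
    """Stub model: proposes the first known path it has not tried yet.

    The candidate list never changes, mirroring weights that do not update.
    """
    known_paths = ["path_a", "path_b", "path_c"]
    for path in known_paths:
        if path not in state.failed_paths:
            return path
    return None  # nothing left that the model can propose


def run_scaffold(target, works, max_attempts=1000):
    """Invoke the model, capture the output, feed results back in, loop."""
    state = ScaffoldState()
    for attempt in range(1, max_attempts + 1):
        proposal = fixed_model(target, state)
        if proposal is None:
            return state, attempt - 1  # out of ideas; more tries do not help
        if proposal in works:
            state.findings.append(proposal)
            return state, attempt
        state.failed_paths.append(proposal)
    return state, max_attempts


# A weakness inside the model's repertoire is found after a few tries.
found_state, found_attempts = run_scaffold("demo-target", works={"path_c"})
print(found_state.findings, found_attempts)

# A weakness outside the repertoire is never found, whatever the budget.
missed_state, missed_attempts = run_scaffold("demo-target", works={"path_z"})
print(missed_state.findings, missed_attempts)
```

The state object is what lets the harness avoid repeating failed paths. Raising `max_attempts` changes nothing in the second run, because the stub has no way to propose a path it does not already know.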

That is why it took roughly 1,000 runs and ~$20,000 in compute, scanning the entire OpenBSD codebase, to surface the headline TCP SACK bug along with several dozen other findings. A skilled researcher with that same access would not approach it that way. They adapt. They use context. They change direction as they learn more about the system. The human model isn’t static; it’s dynamic.

What Mythos primarily demonstrated in its showcase findings is source code analysis, not penetration testing.

The OpenBSD and FFmpeg issues were found because Mythos had direct access to source code, read it, and reasoned through it. Most penetration testers do not have that advantage.

AISI’s “The Last Ones” simulation is closer to offensive work: a 32 step network attack scenario that the model solved end to end in 3 of 10 attempts. But it ran in an undefended environment. That is lab work with an exploit framework, not a real engagement.

Calling any of this penetration testing, as the term is defined by NIST, PTES, and OSSTMM, does not hold up. Every one of those standards requires real world operational conditions and adversary emulation against actual security controls. None of that was present.

The vulnerabilities themselves also matter.

The OpenBSD TCP SACK issue is a denial of service bug. Two packets crash the system. That is it. No access, no persistence, no control, no breach value.

The featured FFmpeg H.264 flaw is even less useful. Anthropic acknowledged in its own write up that it “is not a critical severity vulnerability” and that it “would be challenging to turn this vulnerability into a functioning exploit.”

These are research findings. Not tools a threat actor would prioritize.

AISI explicitly stated:

“We cannot say for sure whether Mythos Preview would be able to attack well defended systems.”

That line is getting glossed over.

In a real environment, this kind of activity does not last long. High volume, repeated attempts get noticed and stopped. EDR flags it, honeypots catch it, and network monitoring picks up the movement. None of that existed in the test.
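Even a crude volume check shows why. The following Python sketch flags any source that generates more than a set number of events inside a sliding time window. It is a toy: real EDR and network monitoring rely on far richer signals, and the function name `noisy_sources`, the thresholds, and the addresses are assumptions made for illustration.

```python
# Hypothetical sliding-window detector; thresholds are illustrative only.
from collections import defaultdict, deque


def noisy_sources(events, window_seconds=60, max_events=20):
    """Return sources that exceed `max_events` within any sliding window.

    `events` is an iterable of (timestamp_seconds, source) tuples,
    sorted by timestamp.
    """
    recent = defaultdict(deque)
    flagged = set()
    for timestamp, source in events:
        window = recent[source]
        window.append(timestamp)
        # Drop events that have aged out of the window.
        while window and timestamp - window[0] >= window_seconds:
            window.popleft()
        if len(window) > max_events:
            flagged.add(source)
    return flagged


# An automated loop firing once per second versus an operator acting
# once every ten minutes.
automated = [(t, "10.0.0.5") for t in range(0, 120)]
operator = [(t, "10.0.0.9") for t in range(0, 3600, 600)]
events = sorted(automated + operator)

flagged = noisy_sources(events)
print(flagged)
```

The automated source trips the threshold within its first half minute. The slow operator never comes close, which is the point: patience and low volume defeat the simplest controls, and high volume iteration does not.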

Surfacing bugs through 1,000 runs of undirected scaffold iteration is not how an operator works. A penetration tester adapts in real time, chains findings together, and builds an attack path based on what the environment gives them.

That difference is what gets lost in the narrative, and it is why the idea that AI is about to replace human offensive security does not hold up.

Known Chains, Not Adversarial Creativity

The attack chains Mythos executed are another place where the hype breaks down.

Every technique Mythos used already exists in documented security research. Integer overflows, TCP SACK issues, JIT heap sprays, KASLR bypasses, and cross cache heap reclamation are all well known attack classes discovered by humans.

Mythos can recognize where known techniques apply and chain them together into working exploits. That is skilled application, not invention. The exploits are novel. The technique classes are not.

Skilled human operators do more than apply known techniques. They adapt under pressure, and that is where the gap shows up.

When known techniques fail, or when detection risk gets too high, humans do not keep forcing the same path. We change approach. We develop methods that do not match known signatures. We adjust based on what the environment gives us. We observe defender behavior and move differently because of it.

Automation cannot do that well. It cannot read defender behavior. It is predictable and noisy. It gets caught.

A human attacker understands the objective in context.

The goal isn’t just to get in. It’s to get in quietly, stay there, and accomplish something specific.

That changes how you approach everything.

We choose the quietest path. We avoid unnecessary exposure. Sometimes we skip an obvious vulnerability entirely because exploiting it would generate too much noise. A slower path that stays invisible is often the better option.

Mythos does not operate that way.

It does not understand the objective beyond what the scaffold defines. It optimizes for success across repeated attempts, not for stealth under real constraints.

A human operator is thinking about the quietest way in. Mythos is just trying to find a way in that works.

One adapts as the environment changes. The other keeps testing paths until one works.

In adversary emulation, stealth is not optional. It is a requirement.

An attacker who gets detected has already failed, no matter how advanced the technique was. Because real threat actors operate with stealth, most organizations are unaware that they’ve been breached until they receive a ransom demand. And even then, some don’t believe it without additional evidence.

Compromise Is Not the Mission

Perhaps the most important gap between the Mythos narrative and operational reality is what penetration testing actually is. Real network penetration testing is adversary emulation. It replicates how a threat actor would target a specific organization. Compliance testing, which is what most organizations actually buy, is building an inventory of vulnerabilities. Red teaming is objective driven adversary emulation with fewer constraints, testing not just your technology but your people, physical locations, processes, and your ability to detect and respond. Mythos demonstrated none of these.

Real threat actors do not care about compromise for its own sake, and their objective is not vulnerability discovery.

The breach is only the beginning. Everything after that is where this goes wrong.

They do not exploit every vulnerability they encounter – only what moves them toward their goal. They focus on what data is actually valuable inside your organization and whether it can be exfiltrated without triggering controls like DLP. They maintain persistence through reboots, patch cycles, and credential rotations. They move laterally to the systems that matter without being detected. They cover their tracks in ways that hold up under forensic investigation.

Ultimately, they are working toward a specific outcome, not just access.

AISI’s 32 step simulation ends at ‘full network takeover,’ just like most compliance penetration tests do. It does not test what comes after or provide insight into how a threat behaves once inside your environment.

It ends at the door.

That is the problem. Effective defense requires understanding what happens next. It depends on threat informed testing built on adversary emulation, not checkbox exercises.

A failure to test at realistic levels of threat results in protection that is equivalent to a paper seatbelt.

What AI Cannot Automate

There exists an entire category of vulnerability that consistently produces the most damaging breaches, and despite what vendors claim, automation cannot effectively test for it.

Business logic flaws happen when a system works as designed, but that design can be abused.

A payment system that lets a user apply a discount code, complete checkout, then cancel and reuse it on a higher value order. An insurance portal where submitting and immediately withdrawing a claim leaves an approval flag set. A healthcare workflow where a patient can escalate to provider level access by manipulating how referrals are handled.

Nothing is technically broken. The system behaves as expected. That is the problem.
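The discount code case can be sketched in Python. Everything here is hypothetical, including the `Store` class, the `CAP` value, and the `HALF50` code. The sketch assumes one plausible design: the order value cap is checked only the first time a code is applied, and cancelling an order hands the code back to the customer.

```python
# Hypothetical checkout model; every name here is invented for illustration.


class Store:
    CAP = 50  # the code is meant only for orders up to this value

    def __init__(self):
        self.validated_codes = set()  # (user, code) pairs that passed the cap check
        self.redeemed_codes = set()   # (user, code) pairs used on a completed order

    def apply_code(self, user, code, order_total):
        """Return the discounted total for this order."""
        # By design, the cap is enforced only the first time a code is applied.
        if (user, code) not in self.validated_codes:
            if order_total > self.CAP:
                raise ValueError("code not valid for this order value")
            self.validated_codes.add((user, code))
        if (user, code) in self.redeemed_codes:
            raise ValueError("code already used")
        return order_total * 0.5

    def checkout(self, user, code):
        """Complete the order and mark the code as used."""
        self.redeemed_codes.add((user, code))

    def cancel(self, user, code):
        """Cancel the order and return the code so the customer is not penalised."""
        self.redeemed_codes.discard((user, code))


store = Store()
price = store.apply_code("alice", "HALF50", 40)     # within the cap
store.checkout("alice", "HALF50")
store.cancel("alice", "HALF50")                     # code is released again
abused = store.apply_code("alice", "HALF50", 2000)  # cap check is skipped
print(price, abused)
```

Each method does what its comment says. The abuse exists only in the sequence, which is why recognising it depends on knowing what the discount was meant to allow.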

These issues require understanding the business, not just the code. You need to know what the system is supposed to do, who should be able to do it, and what happens when someone pushes those assumptions in ways the designers did not anticipate.

Some vendors claim their tools can detect business logic flaws. What they are actually finding are authorization issues like BOLA (Broken Object Level Authorization) and BFLA (Broken Function Level Authorization), which are pattern based vulnerabilities that are easy to test for.

That is not the same thing.
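A pattern based authorization check of this kind fits in a few lines. The Python sketch below uses a hypothetical in-memory API: `INVOICES`, the two handlers, and `has_bola` are invented for illustration and do not model any real scanner or framework.

```python
# Hypothetical in-memory API used to illustrate a BOLA-style check.
# No real framework or scanner is being modelled.

INVOICES = {
    101: {"owner": "alice", "amount": 250},
    102: {"owner": "bob", "amount": 900},
}
USERS = ("alice", "bob")


def get_invoice_vulnerable(requesting_user, invoice_id):
    """Returns any invoice by ID without checking who is asking."""
    return INVOICES.get(invoice_id)


def get_invoice_fixed(requesting_user, invoice_id):
    """Returns the invoice only to its owner."""
    invoice = INVOICES.get(invoice_id)
    if invoice is None or invoice["owner"] != requesting_user:
        return None
    return invoice


def has_bola(handler):
    """Pattern check: can any user read an object owned by someone else?"""
    for invoice_id, invoice in INVOICES.items():
        for user in USERS:
            if user != invoice["owner"] and handler(user, invoice_id) is not None:
                return True
    return False


print(has_bola(get_invoice_vulnerable))
print(has_bola(get_invoice_fixed))
```

The check never needs to know what an invoice is or why it matters to the business. It only swaps identities and compares responses, which is why it automates well.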

Checking whether two roles can access the same endpoint is a technical test. Understanding how a sequence of legitimate actions could be abused to drain an escrow account requires context.

No automated tool has that.

Even StackHawk acknowledged this. Saying AI understands business logic is like saying a spell checker understands literature.

When Mythos has source code, it can reason about logic. What it does not have is context – why the code exists, what process it supports, or how someone with industry knowledge would approach it.

That comes from working inside the environment, not analyzing it from the outside.

Beyond business logic, there are entire categories of attack that do not translate to automation:

- Social engineering. Phishing, vishing, and pretexting campaigns require more than a convincing message. They require intelligence gathering about specific individuals, an understanding of organizational hierarchy and trust relationships, the ability to adapt in real time based on a target’s responses, and the operational infrastructure to deliver and sustain the campaign. LLMs can generate persuasive content, but executing a social engineering operation means reading human behavior, pivoting when something feels off, and making judgment calls that depend on context no model has access to.

- Physical security. Tailgating, badge cloning, dumpster diving, and the exploitation of gaps between physical and logical access controls. These require a human presence and the ability to make real time decisions in a physical environment.

- Supply chain vulnerabilities. The exploitation of trust relationships between your organization and its vendors, contractors, and technology providers. These relationships are unique to your ecosystem and invisible to any tool that lacks direct knowledge of who you do business with, what access they have, and how that access is managed. Identifying supply chain risk requires understanding your specific third party dependencies and the assumptions your organization has made about them.

- Architecture specific misconfigurations. Systems configured in ways that are technically valid but create security gaps unique to how your organization has deployed your particular technology stack. An automated scanner sees a configuration. A skilled human tester understands what that configuration means in the context of your specific environment.

- Novel human error. The misconfigurations and mistakes that have not yet been catalogued, that exist nowhere in any training dataset, and that are specific to decisions made by specific people in your organization.

When organizations misrepresent the capabilities of their penetration testing solutions, they create a dangerous false confidence. Their customers believe they are protected, but they are not.

The gap between what automated platforms actually test and what real world threat actors actually do is the gap between a western gunfight and modern warfare. One is simple, predictable, and fought face to face. The other is multi domain, adaptive, and operates on dimensions the gunfighter cannot even conceive of.

The Real Vulnerability: Industry Misrepresentations

The gap between what the security industry claims and what it delivers is growing, and organizations are the ones absorbing the risk.

Calling most of this a ‘solution’ is misleading. There are no solutions in cybersecurity. There are only mitigations, and some are far less effective than others.

Take backup vendors.

They claim their products make customers ‘ransomware proof’ or that ‘ransomware can not encrypt what it can not modify,’ implying the problem is solved. It is not.

Modern ransomware groups do not need to encrypt anything. They breach your network, exfiltrate your data, and the first time you hear about it is when the ransom demand arrives. If you do not pay, they publish it. If you do, they monetize it anyway.

In that scenario, your backups do not matter because encryption was never the only play.

The same pattern shows up with ‘breach prevention’ platforms.

They promise to stop attacks before they start. They market ‘zero breach’ outcomes and position AI as something that can detect and neutralize threats in real time. That is not how it works.

No prevention tool catches everything. Attackers know exactly what you are running, and they test against those controls before they ever touch your environment. When that layer fails, and it will, the question is what happens next.

Most organizations do not know, because they have never tested it.

AI penetration testing services follow the same playbook. They are marketed as autonomous systems that can ‘think like an attacker’ and replace human testers.

What they actually deliver is familiar:

- pre scripted exploit chains

- known vulnerability checks

- better reporting wrapped in AI language

The AI does not think like an attacker any more than a vending machine thinks like a chef. It runs through known paths, flags what it finds, and calls it a test.

It does not adapt to your environment. It does not understand your business. It does not emulate how a real threat actor would target your organization.

‘AI powered penetration testing’ is automated scanning with better reporting.

The branding changed. The limitations did not.

If these tools solved the problems they claim to solve, breaches would be declining. They are not.

That does not mean these tools are useless.

Backups still protect against data loss from encryption based attacks. Prevention platforms still raise the cost for unsophisticated attackers. Automated scanning still finds known issues at speed and scale.

But none of it is a solution.

And treating it like one creates the kind of false confidence attackers rely on.

The organizations that handle real incidents well understand what each layer of their defense actually does, what it does not do, and where the gaps are.

How AI Should Actually Be Used in Penetration Testing

While the industry is focused on selling AI, threat actors are using it to move faster.

They are not replacing themselves. They are getting more efficient.

LLMs help speed up reconnaissance, generate more convincing phishing content, and make it easier to work through stolen data. They handle the repetitive parts so human operators can focus on decisions.

The adversary has not changed. They have just gotten faster at what they already do.

Penetration testing firms should be paying attention.

AI is a force multiplier for skilled operators, not a replacement.

A tester using AI to sort through enumeration data, connect findings across systems, or draft early documentation can spend more time on the work that requires judgment – business logic, creative attack paths, and understanding how a real attacker would move through an environment.

The teams getting this right are not choosing between AI and human testers.

They are giving their testers better tools.

The result is deeper testing, faster turnaround, and better intelligence – not a cheaper product that covers less ground and calls itself intelligent.

What Does Real Defense Look Like?

At some point, you will be breached.

You cannot control that. What you can control is what happens next.

If your environment is set up for early detection and response, a breach does not have to turn into a major incident. It can be contained before real damage is done.

That does not come from buying more tools. It comes from understanding how an attack would unfold in your environment.

You need to know where you are exposed before someone else finds it.

The only reliable way to get that level of insight is to see a real attack path.

That happens one of two ways:

- You get breached by a real adversary

- Or you hire a team that can emulate one

In 2024, the average breach cost $4.88 million, according to IBM’s Cost of a Data Breach Report.

The difference is whether you learn that lesson on your own terms, or someone else’s.

Once you have that visibility, your approach changes.

You are not guessing anymore.

Instead of buying tools because they rank well on a quadrant, decisions are tied to the attack paths that exist in your environment.

Network segments get prioritized because someone moved through them. Endpoints get better logging because they were part of the chain. Detection and deception get placed where an attacker paused and started looking around.

Every defensive decision becomes a response to something you have already seen, not something a vendor promised, not something theoretical.

FAQ

Can AI like Mythos replace human penetration testers?

At this time, no. Mythos demonstrated impressive autonomous code analysis and exploit chaining in a lab, but not the adaptive, stealthy, objective-driven behavior required for real adversary emulation in defended environments.

What kind of penetration testing is AI most likely to disrupt?

AI is a serious threat to checkbox-style “compliance” penetration testing that focuses on inventorying known vulnerabilities, but it is a force multiplier (not a replacement) for genuine, human-led offensive testing that prioritizes creativity, context, and business impact.

Why don’t Mythos’ results translate directly to real-world attack capability?

The showcased work ran in an undefended lab: no EDR/IDS, no honeypots, no incident response, no penalties for noise, and heavy reliance on scaffolding and roughly a thousand attempts – conditions that do not exist on real networks where noisy automation gets caught quickly.